3D Touch Technology Could Heighten Interaction with Devices

February 3, 2016

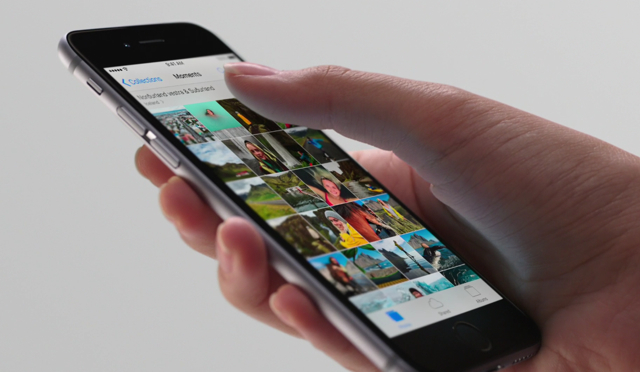

Sensory technology could soon allow smartphones and other devices to interact with humans through touch. Apple’s 3D Touch on the iPhone 6s is one of the most recent developments to hit the market. It allows the iPhone 6s to detect how hard the user is pressing on the screen and to respond with vibration feedback. In the future, sensory technology could have a variety of applications, such as adding another dimension to gaming, photos, social media and other user interfaces.

The 3D Touch and Taptic Engine technology behind Apple’s iPhone 6s is only the beginning. Companies like Disney and Microsoft have also been experimenting with touch-based interfaces. Disney is working on touchscreens with a dynamic surface that adjusts its friction in real time.

According to TechCrunch, “Microsoft has even created a 3D MRI, where pressing harder displays a deeper cross-section of the scan.” South Korean researchers are using sensory technology to mimic flipping through a book: the force of the user’s touch determines how many pages of the e-book are flipped.

This technology is important because it can help make digital experiences feel like real-world actions. That offers plenty of new opportunities for the visually impaired to use digital technology. For example, Phorm makes a touchscreen that can morph into a keyboard with pop-up finger guides. Even for people with sight, touch is an important, instinctual part of interacting with the world.

The possibilities for using sensory technology to improve human-computer interaction are wide-ranging. Search results could have different textures based on how relevant or trustworthy they are. Photos could have texture. Online shoppers could touch something before they buy it. Tactile feedback could help gamers find their way through a mystical world. More broadly, sensory technology could make all interfaces feel more like the real world.
