Adobe’s AI-Enabled System Could Replace Greenscreen Tech

March 27, 2017

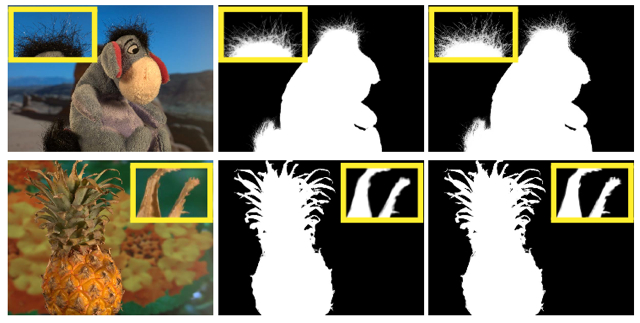

The traditional bluescreen/greenscreen method of extracting foreground content from the background for film and video production may be on its way out. Adobe, in collaboration with the Beckman Institute for Advanced Science and Technology, is researching a new system that relies on deep convolutional neural networks. A recent paper, “Deep Image Matting,” reports that the new method uses a dataset of 49,300 training images to teach the algorithm how to distinguish and eliminate backgrounds.

The Stack notes that “Adobe has been at the forefront of this field for at least 27 years.” In the early days of extracting actors or miniatures from their backgrounds, these elements were always shot in front of a flat field of color.

Walt Disney Studios originally used a yellow backdrop and a sodium-based process, but this never caught on outside the studio. Although blue was used for some time, eventually green was adopted, “since it was proved to be present in less foreground material than blue.” (The Stack reports that Christopher Reeve had to wear a near-violet Superman costume so he didn’t disappear in the bluescreen.)

Adobe’s flagship Photoshop was created by Thomas Knoll with his brother John, a visual effects supervisor at Industrial Light & Magic. In the late 1980s, they “pioneered the digital alpha matte,” and later, computer-generated imagery paired with compositing revolutionized the VFX field. The technique was integrated into many visual effects software programs, including After Effects.
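For readers unfamiliar with the term, an alpha matte is a per-pixel opacity map: each output pixel is a blend of foreground and background, weighted by the matte value. The short Python sketch below illustrates that standard compositing formula (alpha × foreground + (1 − alpha) × background) and nothing more. It is not Adobe's code or the method from the paper, and the function names `composite_pixel` and `composite` are hypothetical, chosen here for illustration.

```python
# Illustrative sketch only: the standard alpha compositing formula,
# not Adobe's implementation. Function names are hypothetical.
# Pixels are (r, g, b) tuples of floats in [0, 1]; alpha is a float in [0, 1].

def composite_pixel(fg, bg, alpha):
    """Blend one foreground pixel over one background pixel."""
    return tuple(alpha * f + (1.0 - alpha) * b for f, b in zip(fg, bg))

def composite(fg_image, bg_image, matte):
    """Blend every pixel; all three inputs are same-sized 2D lists."""
    return [
        [composite_pixel(f, b, a) for f, b, a in zip(fg_row, bg_row, a_row)]
        for fg_row, bg_row, a_row in zip(fg_image, bg_image, matte)
    ]

# One-row example: a red foreground over a blue background, with matte
# values for an opaque, a half-transparent, and a fully transparent pixel.
fg = [[(1.0, 0.0, 0.0)] * 3]
bg = [[(0.0, 0.0, 1.0)] * 3]
matte = [[1.0, 0.5, 0.0]]
result = composite(fg, bg, matte)
```

A greenscreen key or a neural network differs only in how the matte is estimated; once the matte exists, the compositing step is the same blend.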

“The prospect of casual background removal via the use of neural networks seems likely to be a game-changer not only for the VFX industry but also for the much more potentially lucrative VR/AR sphere,” says The Stack, which adds that, with regard to any real-time applications, “latency will be a critical issue in any AI-driven approach to foreground extraction.”

Deep Image Matting is available online.
