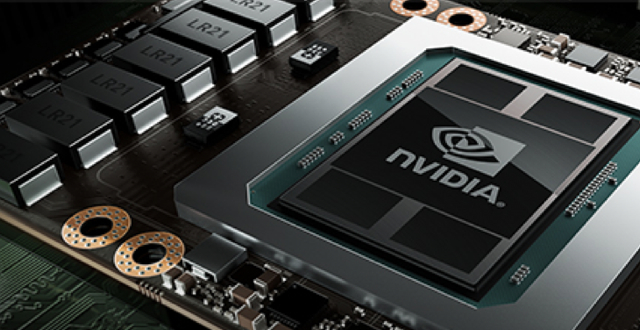

Nvidia has entered the field of artificial intelligence with the debut of its Tesla P100 chip, which contains 15 billion transistors, about twice as many as its previous high-end graphics processor. Nvidia chief executive Jen-Hsun Huang calls it the largest chip ever made. Nvidia is building the DGX-1 computer with eight Tesla P100 chips and AI software; computers from third parties integrating the chip are expected to be on the market by next year. Huang hints that the chip's first use is likely to be in cloud computing services.

The Wall Street Journal quotes Huang as saying the new DGX-1 computer, which will cost $129,000, will be able to handle AI tasks “as rapidly as 250 servers powered by general-purpose chips like Intel’s” for a fraction of the cost. As an example, says Huang, a task that would take 150 hours on one standard server would take two hours on the DGX-1.

The new chip and computer are the result of Nvidia’s multi-year effort to find uses besides videogames for its chips based on graphics processing units (GPUs), which “feature hundreds of simple processors — compared with between one and 22 large calculating engines found on a typical microprocessor.”

One of the first customers for the DGX-1 system, says Huang, is Massachusetts General Hospital, which will use the system’s machine learning capabilities to “analyze around 10 billion medical images to help study disease and devise new treatments.” Nvidia already has expertise in machine learning for autonomous vehicles; participants in Roborace, an event that tests autonomous sports cars, will use Nvidia’s hardware.

Machine learning is a focus for other companies, including IBM, which, in 2014, debuted a chip “designed to function more like the human brain than other processors.”

Moor Insights & Strategy analyst Patrick Moorhead believes that, despite competition from other companies that are debuting new chips or using chips “that can be electrically configured after they leave the factory,” Nvidia is likely to “have an impact on fields where speed is important,” since its hardware can train a system in hours rather than days.

Huang is also directing the company into virtual reality, demonstrating a new Iray technology “that uses hardware in data centers to help generate 3D landscapes that look much more like photographic imagery than today’s VR systems.”
