Microsoft Project Oxford Updates Could Bring AI to More Apps

November 12, 2015

Following announcements that Google is releasing its TensorFlow machine learning platform so developers can create their own artificial intelligence programs, and that Nvidia has made a significant update to its Jetson TX1 supercomputer-on-a-chip, Microsoft is the latest with major AI news. The company has updated its Project Oxford suite of AI tools with new features and programs, including ones designed to identify human emotions and voices, that could make their way into the apps we use on a daily basis.

Notably, a series of new APIs could help software developers create their own apps with AI features.

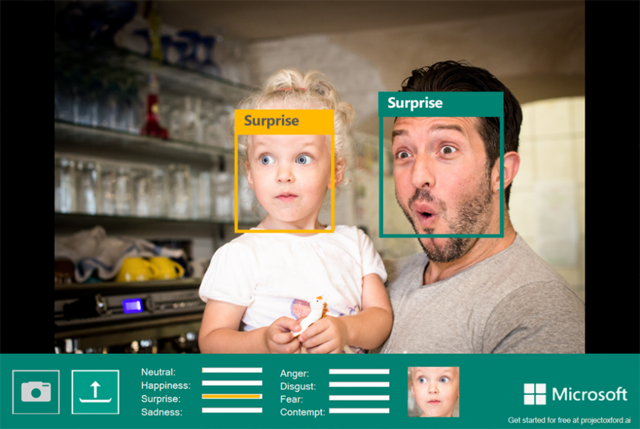

Microsoft has posted beta versions of the APIs on the Project Oxford site, grouped into categories such as Computer Vision, Face, Emotion, Speech, Spell Check and Language Understanding Intelligent Service (LUIS). The site also features a number of demos, along with SDKs for Android, iOS, and Windows.

“Microsoft mainly hyped its Emotion API, which uses machine learning to recognize eight states of emotion (anger, contempt, fear, disgust, happiness, neutral, sadness, or surprise), based on facial expressions,” reports Popular Science.

“The Emotion API is available today, and was debuted earlier in the week in MyMoustache, a moustache-identifying Web application for the Movember charity.”

The company imagines a wide range of applications, including ways to measure customer reactions, a reactive messaging app, tools for learning popular expressions, a means of identifying what people are saying in a crowded room, and even a Video API for automatic editing based on detection of faces, motion and shaky camera movement.

“While Google’s TensorFlow platform is targeted towards the researcher and programmer, Microsoft is aiming for the app developer with focused, easy APIs,” notes Popular Science. “With easier tools like this, more mainstream apps that aren’t backed by multi-billion dollar companies will be able to integrate deep learning and artificial intelligence into their apps.”
