Carnegie Mellon Computer Can Teach Itself Common Sense

November 25, 2013

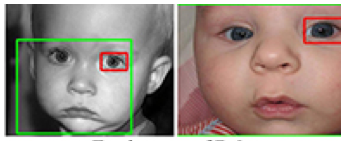

The Never Ending Image Learner (NEIL), a computer program at Carnegie Mellon, searches the Web for images and tries to understand them in order to grow a visual database and gather common sense. It builds on recent advances in computer vision that let programs identify and label objects in images and recognize attributes such as color and lighting. The resulting data will help computers comprehend the visual world.

“But NEIL also makes associations between these things to obtain common sense information that people just seem to know without ever saying — that cars often are found on roads, that buildings tend to be vertical and that ducks look sort of like geese,” reports TG Daily. “Based on text references, it might seem that the color associated with sheep is black, but people — and NEIL — nevertheless know that sheep typically are white.”

“Images are the best way to learn visual properties,” explains Abhinav Gupta, assistant research professor in Carnegie Mellon’s Robotics Institute. “Images also include a lot of common sense information about the world. People learn this by themselves and, with NEIL, we hope that computers will do so as well.”

A cluster of computers has been running the NEIL program since late July and has analyzed three million images, identifying 1,500 types of objects in half a million images and 1,200 types of scenes in hundreds of thousands of images. It has also learned 2,500 associations from thousands of instances.

The NEIL research team, which includes Xinlei Chen, a PhD student in CMU’s Language Technologies Institute, and Abhinav Shrivastava, a PhD student in robotics, will present their findings on December 4 at the IEEE International Conference on Computer Vision in Sydney, Australia.

“One motivation for the NEIL project is to create the world’s largest visual structured knowledge base, where objects, scenes, actions, attributes and contextual relationships are labeled and catalogued,” explains TG Daily.

“What we have learned in the last 5-10 years of computer vision research is that the more data you have, the better computer vision becomes,” Gupta said.

Other projects, such as ImageNet and Visipedia, have attempted to compile this data with human assistance, but because the data is so extensive, it is more effective to have the computer do the work itself.

However, Shrivastava explains that human involvement is necessary because NEIL can sometimes make erroneous claims.

“People don’t always know how or what to teach computers,” he observed. “But humans are good at telling computers when they are wrong.”

NEIL is computationally intensive, running on two clusters of computers that include 200 processing cores.
